#!/usr/bin/env python3
# Copyright (c) 2014-2020 The Bitcoin Core developers
# Copyright (c) 2014-2024 The Dash Core developers
# Distributed under the MIT software license, see the accompanying
# file COPYING or http://www.opensource.org/licenses/mit-license.php.
"""Base class for RPC testing."""

import configparser
import copy

from decimal import Decimal, ROUND_DOWN
from enum import Enum
import argparse
import logging
import os
import platform
import pdb
import random
import re
import shutil
import subprocess
import sys
import tempfile
import time
from concurrent.futures import ThreadPoolExecutor

from typing import List
from .address import ADDRESS_BCRT1_P2SH_OP_TRUE
from .authproxy import JSONRPCException
from test_framework.blocktools import TIME_GENESIS_BLOCK
from . import coverage
from .messages import (
    hash256,
    msg_isdlock,
    ser_compact_size,
    ser_string,
    tx_from_hex,
)
from .script import hash160
from .p2p import NetworkThread
from .test_node import TestNode
from .util import (
    PortSeed,
    MAX_NODES,
    assert_equal,
    check_json_precision,
    copy_datadir,
    force_finish_mnsync,
    get_datadir_path,
    initialize_datadir,
    p2p_port,
    set_node_times,
    satoshi_round,
    softfork_active,
    wait_until_helper,
    get_chain_folder, rpc_port,
)


class TestStatus(Enum):
    PASSED = 1
    FAILED = 2
    SKIPPED = 3

TEST_EXIT_PASSED = 0
TEST_EXIT_FAILED = 1
TEST_EXIT_SKIPPED = 77

TMPDIR_PREFIX = "dash_func_test_"


class SkipTest(Exception):
    """This exception is raised to skip a test"""

    def __init__(self, message):
        self.message = message


class BitcoinTestMetaClass(type):
    """Metaclass for BitcoinTestFramework.

    Ensures that any attempt to register a subclass of `BitcoinTestFramework`
    adheres to a standard whereby the subclass overrides `set_test_params` and
    `run_test` but DOES NOT override either `__init__` or `main`. If any of
    those standards are violated, a ``TypeError`` is raised."""

    def __new__(cls, clsname, bases, dct):
        if not clsname == 'BitcoinTestFramework':
            if not ('run_test' in dct and 'set_test_params' in dct):
                raise TypeError("BitcoinTestFramework subclasses must override "
                                "'run_test' and 'set_test_params'")
            if '__init__' in dct or 'main' in dct:
                raise TypeError("BitcoinTestFramework subclasses may not override "
                                "'__init__' or 'main'")

        return super().__new__(cls, clsname, bases, dct)


class BitcoinTestFramework(metaclass=BitcoinTestMetaClass):
    """Base class for a bitcoin test script.

    Individual bitcoin test scripts should subclass this class and override the set_test_params() and run_test() methods.

    Individual tests can also override the following methods to customize the test setup:

    - add_options()
    - setup_chain()
    - setup_network()
    - setup_nodes()

    The __init__() and main() methods should not be overridden.
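
The subclassing contract described above can be sketched in isolation. This is a
minimal illustration, not the framework itself: `SketchMeta`, `SketchFramework`,
`ExampleTest` and `overriding_main_is_rejected` are hypothetical stand-in names,
and the stand-in `main()` simply returns 0 instead of setting up nodes and exiting.

```python
class SketchMeta(type):
    # Hypothetical stand-in for the metaclass checks: subclasses must define
    # run_test and set_test_params, and must not define __init__ or main.
    def __new__(cls, clsname, bases, dct):
        if clsname != 'SketchFramework':
            if not ('run_test' in dct and 'set_test_params' in dct):
                raise TypeError("subclasses must override 'run_test' and 'set_test_params'")
            if '__init__' in dct or 'main' in dct:
                raise TypeError("subclasses may not override '__init__' or 'main'")
        return super().__new__(cls, clsname, bases, dct)


class SketchFramework(metaclass=SketchMeta):
    # Stand-in base class: __init__ calls the parameter hook, main drives the test.
    def __init__(self):
        self.num_nodes = None
        self.set_test_params()

    def main(self):
        assert self.num_nodes is not None, "set_test_params() must set num_nodes"
        self.run_test()
        return 0


class ExampleTest(SketchFramework):
    # A conforming test: overrides only the two required hooks.
    def set_test_params(self):
        self.num_nodes = 1
        self.ran = False

    def run_test(self):
        self.ran = True


def overriding_main_is_rejected():
    # Defining a subclass that overrides main fails at class-creation time.
    try:
        class BadTest(SketchFramework):
            def set_test_params(self):
                pass

            def run_test(self):
                pass

            def main(self):
                pass
    except TypeError:
        return True
    return False
```

Because the check runs when the class statement executes, a non-conforming test
fails on import rather than partway through a run.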

    This class also contains various public and private helper methods."""

    chain = None  # type: str
    setup_clean_chain = None  # type: bool


    def __init__(self):
        """Sets test framework defaults. Do not override this method. Instead, override the set_test_params() method"""
        self.chain: str = 'regtest'
        self.setup_clean_chain: bool = False
        self.disable_mocktime: bool = False
        self.nodes: List[TestNode] = []
        self.network_thread = None
        self.mocktime = 0
        self.rpc_timeout = 60  # Wait for up to 60 seconds for the RPC server to respond
        self.supports_cli = True
        self.bind_to_localhost_only = True
        self.parse_args()

        self.default_wallet_name = "default_wallet" if self.options.descriptors else ""
        self.wallet_data_filename = "wallet.dat"
        self.extra_args_from_options = []
        # Optional list of wallet names that can be set in set_test_params to
        # create and import keys to. If unset, default is len(nodes) *
        # [default_wallet_name]. If wallet names are None, wallet creation is
        # skipped. If list is truncated, wallet creation is skipped and keys
        # are not imported.
        self.wallet_names = None
        # By default the wallet is not required. Set to true by skip_if_no_wallet().
        # When False, we ignore wallet_names regardless of what it is.
        self.requires_wallet = False
        self.set_test_params()
        assert self.wallet_names is None or len(self.wallet_names) <= self.num_nodes
        if self.options.timeout_scale != 1:
            print("DEPRECATED: --timeoutscale option is no longer available, please use --timeout-factor instead")
            if self.options.timeout_factor == 1:
                self.options.timeout_factor = self.options.timeout_scale
        if self.options.timeout_factor == 0:
            self.options.timeout_factor = 99999
        self.rpc_timeout = int(self.rpc_timeout * self.options.timeout_factor)  # optionally, increase timeout by a factor


    def main(self):
        """Main function. This should not be overridden by the subclass test scripts."""

        assert hasattr(self, "num_nodes"), "Test must set self.num_nodes in set_test_params()"

        try:
            self.setup()
            self.run_test()
        except JSONRPCException:
            self.log.exception("JSONRPC error")
            self.success = TestStatus.FAILED
        except SkipTest as e:
            self.log.warning("Test Skipped: %s" % e.message)
            self.success = TestStatus.SKIPPED
        except AssertionError:
            self.log.exception("Assertion failed")
            self.success = TestStatus.FAILED
        except KeyError:
            self.log.exception("Key error")
            self.success = TestStatus.FAILED
        except subprocess.CalledProcessError as e:
            self.log.exception("Called Process failed with '{}'".format(e.output))
            self.success = TestStatus.FAILED
        except Exception:
            self.log.exception("Unexpected exception caught during testing")
            self.success = TestStatus.FAILED
        except KeyboardInterrupt:
            self.log.warning("Exiting after keyboard interrupt")
            self.success = TestStatus.FAILED
        finally:
            exit_code = self.shutdown()
            sys.exit(exit_code)

    def parse_args(self):
        previous_releases_path = os.getenv("PREVIOUS_RELEASES_DIR") or os.getcwd() + "/releases"
        parser = argparse.ArgumentParser(usage="%(prog)s [options]")
        parser.add_argument("--nocleanup", dest="nocleanup", default=False, action="store_true",
                            help="Leave dashds and test.* datadir on exit or error")
        parser.add_argument("--noshutdown", dest="noshutdown", default=False, action="store_true",
                            help="Don't stop dashds after the test execution")
        parser.add_argument("--cachedir", dest="cachedir", default=os.path.abspath(os.path.dirname(os.path.realpath(__file__)) + "/../../cache"),
                            help="Directory for caching pregenerated datadirs (default: %(default)s)")
        parser.add_argument("--tmpdir", dest="tmpdir", help="Root directory for datadirs")
        parser.add_argument("-l", "--loglevel", dest="loglevel", default="INFO",
                            help="log events at this level and higher to the console. Can be set to DEBUG, INFO, WARNING, ERROR or CRITICAL. Passing --loglevel DEBUG will output all logs to console. Note that logs at all levels are always written to the test_framework.log file in the temporary test directory.")
        parser.add_argument("--tracerpc", dest="trace_rpc", default=False, action="store_true",
                            help="Print out all RPC calls as they are made")
        parser.add_argument("--portseed", dest="port_seed", default=os.getpid(), type=int,
                            help="The seed to use for assigning port numbers (default: current process id)")
        parser.add_argument("--previous-releases", dest="prev_releases", action="store_true",
                            default=os.path.isdir(previous_releases_path) and bool(os.listdir(previous_releases_path)),
                            help="Force test of previous releases (default: %(default)s)")
        parser.add_argument("--coveragedir", dest="coveragedir",
                            help="Write tested RPC commands into this directory")
        parser.add_argument("--configfile", dest="configfile",
                            default=os.path.abspath(os.path.dirname(os.path.realpath(__file__)) + "/../../config.ini"),
                            help="Location of the test framework config file (default: %(default)s)")
        parser.add_argument("--pdbonfailure", dest="pdbonfailure", default=False, action="store_true",
                            help="Attach a python debugger if test fails")
        parser.add_argument("--usecli", dest="usecli", default=False, action="store_true",
                            help="use dash-cli instead of RPC for all commands")
        parser.add_argument("--dashd-arg", dest="dashd_extra_args", default=[], action="append",
                            help="Pass extra args to all dashd instances")
        parser.add_argument("--timeoutscale", dest="timeout_scale", default=1, type=int,
                            help=argparse.SUPPRESS)

        parser.add_argument("--perf", dest="perf", default=False, action="store_true",
                            help="profile running nodes with perf for the duration of the test")

parser . add_argument ( " --valgrind " , dest = " valgrind " , default = False , action = " store_true " ,

help = " run nodes under the valgrind memory error detector: expect at least a ~10x slowdown, valgrind 3.14 or later required " )

2019-05-14 15:00:55 +02:00

parser . add_argument ( " --randomseed " , type = int ,

help = " set a random seed for deterministically reproducing a previous test run " )

2020-05-19 02:00:24 +02:00

parser . add_argument ( ' --timeout-factor ' , dest = " timeout_factor " , type = float , default = 1.0 , help = ' adjust test timeouts by a factor. Setting it to 0 disables all timeouts ' )

2020-11-02 16:54:06 +01:00

group = parser . add_mutually_exclusive_group ( )

2021-02-05 14:17:04 +01:00

group . add_argument ( " --descriptors " , action = ' store_const ' , const = True ,

2020-11-02 16:54:06 +01:00

help = " Run test using a descriptor wallet " , dest = ' descriptors ' )

2021-02-05 14:17:04 +01:00

group . add_argument ( " --legacy-wallet " , action = ' store_const ' , const = False ,

2020-11-02 16:54:06 +01:00

help = " Run test using legacy wallets " , dest = ' descriptors ' )

2023-02-14 09:59:39 +01:00

2014-07-08 18:07:23 +02:00

self . add_options ( parser )

2023-02-14 09:59:39 +01:00

self . options = parser . parse_args ( )

2020-05-22 12:29:09 +02:00

self . options . previous_releases_path = previous_releases_path

2023-02-14 09:59:39 +01:00

config = configparser . ConfigParser ( )

config . read_file ( open ( self . options . configfile ) )

self . config = config

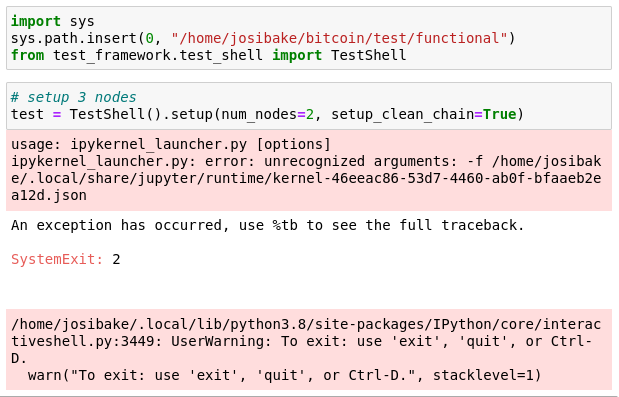

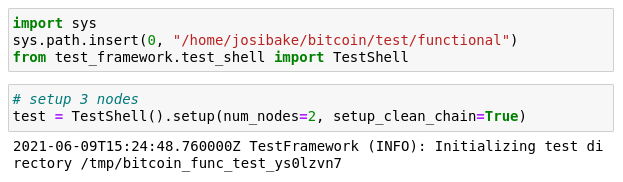

Merge bitcoin/bitcoin#22201: test: Fix TestShell to allow running in Jupyter Notebook

168b6c317ca054c1287c36be532964e861f44266 add dummy file param to fix jupyter (Josiah Baker)

Pull request description:

this fixes argparse to use `parse_known_args`. previously, if an unknown argument was passed, argparse would fail with an `unrecognized arguments: %s` error.

## why

the documentation mentions being able to run `TestShell` in a REPL interpreter or a jupyter notebook. when i tried to run inside a jupyter notebook, i got the following error:

this was due to the notebook passing the filename of the notebook as an argument. this is a known problem with notebooks and argparse, documented here: https://stackoverflow.com/questions/48796169/how-to-fix-ipykernel-launcher-py-error-unrecognized-arguments-in-jupyter

## testing

to test, make sure you have jupyter notebooks installed. you can do this by running:

```

pip install notebook

```

or following instructions from [here](https://jupyterlab.readthedocs.io/en/stable/getting_started/installation.html).

once installed, start a notebook (`jupyter notebook`), launch a python3 kernel and run the following snippet:

```python

import sys

# make sure this is the path for your system

sys.path.insert(0, "/path/to/bitcoin/test/functional")

from test_framework.test_shell import TestShell

test = TestShell().setup(num_nodes=2, setup_clean_chain=True)

```

you should see the following output, without errors:

if you are unfamiliar with notebooks, here is a short guide on using them: https://jupyter.readthedocs.io/en/latest/running.html

ACKs for top commit:

MarcoFalke:

review ACK 168b6c317ca054c1287c36be532964e861f44266

jamesob:

crACK https://github.com/bitcoin/bitcoin/pull/22201/commits/168b6c317ca054c1287c36be532964e861f44266

practicalswift:

cr ACK 168b6c317ca054c1287c36be532964e861f44266

Tree-SHA512: 4fee1563bf64a1cf9009934182412446cde03badf2f19553b78ad2cb3ceb0e5e085a5db41ed440473494ac047f04641311ecbba3948761c6553d0ca4b54937b4

2021-06-22 08:11:14 +02:00

# Running TestShell in a Jupyter notebook causes an additional -f argument

# To keep TestShell from failing with an "unrecognized argument" error, we add a dummy "-f" argument

# source: https://stackoverflow.com/questions/48796169/how-to-fix-ipykernel-launcher-py-error-unrecognized-arguments-in-jupyter/56349168#56349168

parser . add_argument ( " -f " , " --fff " , help = " a dummy argument to fool ipython " , default = " 1 " )

2014-07-08 18:07:23 +02:00

2021-02-05 14:17:04 +01:00

if self . options . descriptors is None :

# Prefer BDB unless it isn't available

if self . is_bdb_compiled ( ) :

self . options . descriptors = False

elif self . is_sqlite_compiled ( ) :

self . options . descriptors = True

else :

# If neither are compiled, tests requiring a wallet will be skipped and the value of self.options.descriptors won't matter

# It still needs to exist and be None in order for tests to work however.

self . options . descriptors = None

2022-06-10 12:37:19 +02:00

PortSeed . n = self . options . port_seed

    def setup(self):
        """Call this method to start up the test framework object with options set."""

        check_json_precision()

        self.options.cachedir = os.path.abspath(self.options.cachedir)

        config = self.config

        fname_bitcoind = os.path.join(
            config["environment"]["BUILDDIR"],
            "src",
            "dashd" + config["environment"]["EXEEXT"],
        )
        fname_bitcoincli = os.path.join(
            config["environment"]["BUILDDIR"],
            "src",
            "dash-cli" + config["environment"]["EXEEXT"],
        )
        self.options.bitcoind = os.getenv("BITCOIND", default=fname_bitcoind)
        self.options.bitcoincli = os.getenv("BITCOINCLI", default=fname_bitcoincli)

        self.extra_args_from_options = self.options.dashd_extra_args

        os.environ['PATH'] = os.pathsep.join([
            os.path.join(config['environment']['BUILDDIR'], 'src'),
            os.path.join(config['environment']['BUILDDIR'], 'src', 'qt'), os.environ['PATH']
        ])

        # Set up temp directory and start logging
        if self.options.tmpdir:
            self.options.tmpdir = os.path.abspath(self.options.tmpdir)
            os.makedirs(self.options.tmpdir, exist_ok=False)
        else:
            self.options.tmpdir = tempfile.mkdtemp(prefix=TMPDIR_PREFIX)
        self._start_logging()

        # Seed the PRNG. Note that test runs are reproducible if and only if
        # a single thread accesses the PRNG. For more information, see
        # https://docs.python.org/3/library/random.html#notes-on-reproducibility.
        # The network thread shouldn't access random. If we need to change the
        # network thread to access randomness, it should instantiate its own
        # random.Random object.
        seed = self.options.randomseed

        if seed is None:
            seed = random.randrange(sys.maxsize)
        else:
            self.log.debug("User supplied random seed {}".format(seed))

        random.seed(seed)
        self.log.debug("PRNG seed is: {}".format(seed))

        self.log.debug('Setting up network thread')
        self.network_thread = NetworkThread()
        self.network_thread.start()

        if self.options.usecli:
            if not self.supports_cli:
                raise SkipTest("--usecli specified but test does not support using CLI")
            self.skip_if_no_cli()
        self.skip_test_if_missing_module()
        self.setup_chain()
        self.setup_network()

        self.success = TestStatus.PASSED


def shutdown ( self ) :

""" Call this method to shut down the test framework object. """

if self . success == TestStatus . FAILED and self . options . pdbonfailure :


print ( " Testcase failed. Attaching python debugger. Enter ? for help " )

pdb . set_trace ( )


self . log . debug ( ' Closing down network thread ' )

self . network_thread . close ( )


if not self . options . noshutdown :


self . log . info ( " Stopping nodes " )


try :


if self . nodes :

self . stop_nodes ( )


except BaseException :


self . success = TestStatus . FAILED


self . log . exception ( " Unexpected exception caught during shutdown " )


else :


for node in self . nodes :

node . cleanup_on_exit = False


self . log . info ( " Note: dashds were not stopped and may still be running " )


should_clean_up = (

not self . options . nocleanup and

not self . options . noshutdown and


self . success != TestStatus . FAILED and


not self . options . perf

)

if should_clean_up :


self . log . info ( " Cleaning up {} on exit " . format ( self . options . tmpdir ) )

cleanup_tree_on_exit = True


elif self . options . perf :

self . log . warning ( " Not cleaning up dir {} due to perf data " . format ( self . options . tmpdir ) )

cleanup_tree_on_exit = False


else :


self . log . warning ( " Not cleaning up dir {} " . format ( self . options . tmpdir ) )


cleanup_tree_on_exit = False


if self . success == TestStatus . PASSED :


self . log . info ( " Tests successful " )


exit_code = TEST_EXIT_PASSED


elif self . success == TestStatus . SKIPPED :


self . log . info ( " Test skipped " )


exit_code = TEST_EXIT_SKIPPED


else :


self . log . error ( " Test failed. Test logging available at %s /test_framework.log " , self . options . tmpdir )


self . log . error ( " " )


self . log . error ( " Hint: Call {} ' {} ' to consolidate all logs " . format ( os . path . normpath ( os . path . dirname ( os . path . realpath ( __file__ ) ) + " /../combine_logs.py " ) , self . options . tmpdir ) )


self . log . error ( " " )

self . log . error ( " If this failure happened unexpectedly or intermittently, please file a bug and provide a link or upload of the combined log. " )

self . log . error ( self . config [ ' environment ' ] [ ' PACKAGE_BUGREPORT ' ] )

self . log . error ( " " )


exit_code = TEST_EXIT_FAILED


# Logging.shutdown will not remove stream- and filehandlers, so we must

# do it explicitly. Handlers are removed so the next test run can apply

# different log handler settings.

# See: https://docs.python.org/3/library/logging.html#logging.shutdown

for h in list ( self . log . handlers ) :

h . flush ( )

h . close ( )

self . log . removeHandler ( h )

rpc_logger = logging . getLogger ( " BitcoinRPC " )

for h in list ( rpc_logger . handlers ) :

h . flush ( )

rpc_logger . removeHandler ( h )


if cleanup_tree_on_exit :

shutil . rmtree ( self . options . tmpdir )


self . nodes . clear ( )

return exit_code


# Methods to override in subclass test scripts.

def set_test_params ( self ) :


""" Tests must override this method to change default values for number of nodes, topology, etc """


raise NotImplementedError


def add_options ( self , parser ) :

""" Override this method to add command-line options to the test """

pass


def skip_test_if_missing_module ( self ) :

""" Override this method to skip a test if a module is not compiled """

pass


def setup_chain ( self ) :

""" Override this method to customize blockchain setup """

self . log . info ( " Initializing test directory " + self . options . tmpdir )

if self . setup_clean_chain :

self . _initialize_chain_clean ( )

else :

self . _initialize_chain ( )


if not self . disable_mocktime :

self . _initialize_mocktime ( is_genesis = self . setup_clean_chain )


def setup_network ( self ) :

""" Override this method to customize test network topology """

self . setup_nodes ( )

# Connect the nodes as a "chain". This allows us

# to split the network between nodes 1 and 2 to get

# two halves that can work on competing chains.


#

# Topology looks like this:

# node0 <-- node1 <-- node2 <-- node3

#

# If all nodes are in IBD (clean chain from genesis), node0 is assumed to be the source of blocks (miner). To

# ensure block propagation, all nodes will establish outgoing connections toward node0.

# See fPreferredDownload in net_processing.

#

# If further outbound connections are needed, they can be added at the beginning of the test with e.g.


# self.connect_nodes(1, 2)


for i in range ( self . num_nodes - 1 ) :


self . connect_nodes ( i + 1 , i )


self . sync_all ( )

def setup_nodes ( self ) :

""" Override this method to customize test node setup """


""" If this method is updated - backport changes to DashTestFramework.setup_nodes """


extra_args = [ [ ] ] * self . num_nodes


if hasattr ( self , " extra_args " ) :

extra_args = self . extra_args


self . add_nodes ( self . num_nodes , extra_args )


self . start_nodes ( )


if self . requires_wallet :


self . import_deterministic_coinbase_privkeys ( )


if not self . setup_clean_chain :

for n in self . nodes :

assert_equal ( n . getblockchaininfo ( ) [ " blocks " ] , 199 )

# To ensure that all nodes are out of IBD, the most recent block

# must have a timestamp not too old (see IsInitialBlockDownload()).


if not self . disable_mocktime :

self . log . debug ( ' Generate a block with current mocktime ' )


self . bump_mocktime ( 156 * 200 , update_schedulers = False )


block_hash = self . generate ( self . nodes [ 0 ] , 1 , sync_fun = self . no_op ) [ 0 ]


block = self . nodes [ 0 ] . getblock ( blockhash = block_hash , verbosity = 0 )

for n in self . nodes :

n . submitblock ( block )

chain_info = n . getblockchaininfo ( )

assert_equal ( chain_info [ " blocks " ] , 200 )

assert_equal ( chain_info [ " initialblockdownload " ] , False )


def import_deterministic_coinbase_privkeys ( self ) :


for i in range ( len ( self . nodes ) ) :

self . init_wallet ( i )

def init_wallet ( self , i ) :

wallet_name = self . default_wallet_name if self . wallet_names is None else ( self . wallet_names [ i ] if i < len ( self . wallet_names ) else False )

if wallet_name is not False :

n = self . nodes [ i ]


if wallet_name is not None :


a775f7c7fd0b9094fcbeee6ba92206d5bbb19164 Implement Unlock and Encrypt in DescriptorScriptPubKeyMan (Andrew Chow)

bfdd0734869a22217c15858d7a76d0dacc2ebc86 Implement GetNewDestination for DescriptorScriptPubKeyMan (Andrew Chow)

58c7651821b0eeff0a99dc61d78d2e9e07986580 Implement TopUp in DescriptorScriptPubKeyMan (Andrew Chow)

e014886a342508f7c8d80323eee9a5f314eaf94c Implement SetupGeneration for DescriptorScriptPubKeyMan (Andrew Chow)

46dfb99768e7d03a3cf552812d5b41ceaebc06be Implement writing descriptorkeys, descriptorckeys, and descriptors to wallet file (Andrew Chow)

4cb9b69be031e1dc65d8964794781b347fd948f5 Implement several simple functions in DescriptorScriptPubKeyMan (Andrew Chow)

d1ec3e4f19487b4b100f80ad02eac063c571777d Add IsSingleType to Descriptors (Andrew Chow)

953feb3d2724f5398dd48990c4957a19313d2c8c Implement loading of keys for DescriptorScriptPubKeyMan (Andrew Chow)

2363e9fcaa41b68bf11153f591b95f2d41ff9a1a Load the descriptor cache from the wallet file (Andrew Chow)

46c46aebb7943e1e2e96755e94dc6c197920bf75 Implement GetID for DescriptorScriptPubKeyMan (Andrew Chow)

ec2f9e1178c8e38c0a5ca063fe81adac8f916348 Implement IsHDEnabled in DescriptorScriptPubKeyMan (Andrew Chow)

741122d4c1a62ced3e96d16d67f4eeb3a6522d99 Implement MarkUnusedAddresses in DescriptorScriptPubKeyMan (Andrew Chow)

2db7ca765c8fb2c71dd6f7c4f29ad70e68ff1720 Implement IsMine for DescriptorScriptPubKeyMan (Andrew Chow)

db7177af8c159abbcc209f2caafcd45d54c181c5 Add LoadDescriptorScriptPubKeyMan and SetActiveScriptPubKeyMan to CWallet (Andrew Chow)

78f8a92910d34247fa5d04368338c598d9908267 Implement SetType in DescriptorScriptPubKeyMan (Andrew Chow)

834de0300cde57ca3f662fb7aa5b1bdaed68bc8f Store WalletDescriptor in DescriptorScriptPubKeyMan (Andrew Chow)

d8132669e10c1db9ae0c2ea0d3f822d7d2f01345 Add a lock cs_desc_man for DescriptorScriptPubKeyMan (Andrew Chow)

3194a7f88ac1a32997b390b4f188c4b6a4af04a5 Introduce WalletDescriptor class (Andrew Chow)

6b13cd3fa854dfaeb9e269bff3d67cacc0e5b5dc Create LegacyScriptPubKeyMan when not a descriptor wallet (Andrew Chow)

aeac157c9dc141546b45e06ba9c2e641ad86083f Return nullptr from GetLegacyScriptPubKeyMan if descriptor wallet (Andrew Chow)

96accc73f067c7c95946e9932645dd821ef67f63 Add WALLET_FLAG_DESCRIPTORS (Andrew Chow)

6b8119af53ee2fdb4c4b5b24b4e650c0dc3bd27c Introduce DescriptorScriptPubKeyMan as a dummy class (Andrew Chow)

06620302c713cae65ee8e4ff9302e4c88e2a1285 Introduce SetType function to tell ScriptPubKeyMans the type and internal-ness of it (Andrew Chow)

Pull request description:

Introducing the wallet of the glorious future (again): native descriptor wallets. With native descriptor wallets, addresses are generated from descriptors. Instead of generating keys and deriving addresses from keys, addresses come from the scriptPubKeys produced by a descriptor. Native descriptor wallets will be optional for now and can only be created by using `createwallet`.

Descriptor wallets will store descriptors, master keys from the descriptor, and descriptor cache entries. Keys are derived from descriptors on the fly. In order to allow choosing different address types, 6 descriptors are needed for normal use. There is a pair of primary and change descriptors for each of the 3 address types. With the default keypool size of 1000, each descriptor has 1000 scriptPubKeys and descriptor cache entries pregenerated. This has a side effect of making wallets large since 6000 pubkeys are written to the wallet by default, instead of the current 2000. scriptPubKeys are kept only in memory and are generated every time a descriptor is loaded. By default, we use the standard BIP 44, 49, 84 derivation paths with an external and internal derivation chain for each.
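As a rough illustration of the arithmetic in the paragraph above, the following Python sketch counts the descriptors and pregenerated entries of a default wallet. Only the counts (3 address types, a primary and a change descriptor for each, keypool size 1000) come from the description; the labels and variable names are assumptions made for the example.

```python
# Illustrative labels for the 3 address types; the description only states the count.
ADDRESS_TYPES = ["legacy", "p2sh-segwit", "bech32"]
# One primary (external) and one change (internal) descriptor per address type.
CHAINS = ["external", "internal"]
# Default keypool size stated in the description.
KEYPOOL_SIZE = 1000

# Every (address type, chain) pair gets its own descriptor.
descriptors = [(addr_type, chain) for addr_type in ADDRESS_TYPES for chain in CHAINS]

# Each descriptor pregenerates KEYPOOL_SIZE cache entries.
pregenerated_entries = len(descriptors) * KEYPOOL_SIZE

print(len(descriptors))       # 6
print(pregenerated_entries)   # 6000
```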

Descriptors can also be imported with a new `importdescriptors` RPC.

Native descriptor wallets use the `ScriptPubKeyMan` interface introduced in #16341 to add a `DescriptorScriptPubKeyMan`. This defines a different IsMine which uses the simpler model of "does this scriptPubKey exist in this wallet". Furthermore, `DescriptorScriptPubKeyMan` does not have watchonly, so with native descriptor wallets, it is not possible to have a wallet with both watchonly and non-watchonly things. Rather a wallet with `disable_private_keys` needs to be used for watchonly things.

A `--descriptors` option was added to some tests (`wallet_basic.py`, `wallet_encryption.py`, `wallet_keypool.py`, `wallet_keypool_topup.py`, and `wallet_labels.py`) to allow for these tests to use descriptor wallets. Additionally, several RPCs are disabled for descriptor wallets (`importprivkey`, `importpubkey`, `importaddress`, `importmulti`, `addmultisigaddress`, `dumpprivkey`, `dumpwallet`, `importwallet`, and `sethdseed`).

ACKs for top commit:

Sjors:

utACK 223588b1bbc63dc57098bbd0baa48635e0cc0b82 (rebased, nits addressed)

jonatack:

Code review re-ACK 223588b1bbc63dc57098bbd0baa48635e0cc0b82.

fjahr:

re-ACK 223588b1bbc63dc57098bbd0baa48635e0cc0b82

instagibbs:

light re-ACK 223588b

meshcollider:

Code review ACK 223588b1bbc63dc57098bbd0baa48635e0cc0b82

Tree-SHA512: 59bc52aeddbb769ed5f420d5d240d8137847ac821b588eb616b34461253510c1717d6a70bab8765631738747336ae06f45ba39603ccd17f483843e5ed9a90986

Introduce SetType function to tell ScriptPubKeyMans the type and internal-ness of it

Introduce DescriptorScriptPubKeyMan as a dummy class

Add WALLET_FLAG_DESCRIPTORS

Return nullptr from GetLegacyScriptPubKeyMan if descriptor wallet

Create LegacyScriptPubKeyMan when not a descriptor wallet

Introduce WalletDescriptor class

WalletDescriptor is a Descriptor with other wallet metadata

Add a lock cs_desc_man for DescriptorScriptPubKeyMan

Store WalletDescriptor in DescriptorScriptPubKeyMan

Implement SetType in DescriptorScriptPubKeyMan

Add LoadDescriptorScriptPubKeyMan and SetActiveScriptPubKeyMan to CWallet

Implement IsMine for DescriptorScriptPubKeyMan

Adds a set of scriptPubKeys that DescriptorScriptPubKeyMan tracks. If the given script is in that set, it is considered ISMINE_SPENDABLE.
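The membership model described here can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the wallet's C++ implementation: the class, method, and enum names below are hypothetical, and only the rule "script in the tracked set means spendable" is taken from the commit message.

```python
from enum import Enum


class IsMineSketch(Enum):
    # Simplified stand-in for the wallet's ismine result (names are illustrative).
    NO = 0
    SPENDABLE = 1


class DescriptorSPKManSketch:
    """Toy model: tracks a set of scriptPubKeys and answers IsMine by membership."""

    def __init__(self) -> None:
        self.script_pub_keys = set()

    def add_script_pub_key(self, spk: bytes) -> None:
        # In this sketch, scripts are added by hand; a real manager would
        # derive them from its descriptor.
        self.script_pub_keys.add(spk)

    def is_mine(self, spk: bytes) -> IsMineSketch:
        # "Does this scriptPubKey exist in this wallet?"
        return IsMineSketch.SPENDABLE if spk in self.script_pub_keys else IsMineSketch.NO


tracked = bytes.fromhex("0014" + "11" * 20)  # arbitrary example script bytes
man = DescriptorSPKManSketch()
man.add_script_pub_key(tracked)
```

With this model, `man.is_mine(tracked)` yields `IsMineSketch.SPENDABLE`, and any script that was never added yields `IsMineSketch.NO`.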

Implement MarkUnusedAddresses in DescriptorScriptPubKeyMan

Implement IsHDEnabled in DescriptorScriptPubKeyMan

Implement GetID for DescriptorScriptPubKeyMan

Load the descriptor cache from the wallet file

Implement loading of keys for DescriptorScriptPubKeyMan

Add IsSingleType to Descriptors

IsSingleType will return whether the descriptor will give one or multiple scriptPubKeys

Implement several simple functions in DescriptorScriptPubKeyMan

Implements a bunch of one liners: UpgradeKeyMetadata, IsFirstRun, HavePrivateKeys, KeypoolCountExternalKeys, GetKeypoolSize, GetTimeFirstKey, CanGetAddresses, RewriteDB

Implement writing descriptorkeys, descriptorckeys, and descriptors to wallet file

Implement SetupGeneration for DescriptorScriptPubKeyMan

Implement TopUp in DescriptorScriptPubKeyMan

Implement GetNewDestination for DescriptorScriptPubKeyMan

Implement Unlock and Encrypt in DescriptorScriptPubKeyMan

Implement GetReservedDestination in DescriptorScriptPubKeyMan

Implement ReturnDestination in DescriptorScriptPubKeyMan

Implement GetKeypoolOldestTime and only display it if greater than 0

Implement GetSolvingProvider for DescriptorScriptPubKeyMan

Internally, a GetSigningProvider function is introduced which allows for some private keys to be optionally included. This can be called with a script as the argument (i.e. a scriptPubKey from our wallet when we are signing) or with a pubkey. In order to know what index to expand the private keys for that pubkey, we need to also cache all of the pubkeys involved when we expand the descriptor. So SetCache and TopUp are updated to do this too.
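The pubkey-to-index caching idea can be sketched as follows. This is a minimal Python illustration, assuming a plain dictionary as the cache; the class and method names are hypothetical and do not correspond to the C++ code.

```python
from typing import Dict, List, Optional


class PubkeyIndexCacheSketch:
    """Toy cache: remembers which descriptor index produced each pubkey."""

    def __init__(self) -> None:
        self.pubkey_to_index: Dict[bytes, int] = {}

    def record_expansion(self, index: int, pubkeys: List[bytes]) -> None:
        # Called whenever the descriptor is expanded at `index`
        # (the role the commit message assigns to SetCache and TopUp).
        for pubkey in pubkeys:
            self.pubkey_to_index[pubkey] = index

    def index_for_pubkey(self, pubkey: bytes) -> Optional[int]:
        # Tells a signer at which index to expand private keys, if known.
        return self.pubkey_to_index.get(pubkey)


cache = PubkeyIndexCacheSketch()
first_key = b"\x02" + b"\xaa" * 32   # arbitrary example bytes, not real keys
second_key = b"\x03" + b"\xbb" * 32
cache.record_expansion(0, [first_key])
cache.record_expansion(1, [second_key])
```

A lookup such as `cache.index_for_pubkey(second_key)` then returns the index recorded at expansion time, and an unknown pubkey returns `None`.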